Current plan is to go ahead without the Oculus SDK’s clamping; worst case, we can compare against default Oculus renders.

That makes the next step finding scenes to display. I’m going to take a quick look at Unity and Unreal while integrating with our in-house code.

Also, Oliver Kreylos (of UC Davis and Vrui) has made a simulator of sorts for the Rift’s optics. It’s interesting for at least two reasons:

1. It might be useful to build something similar ourselves, to ease exploration and explanation.

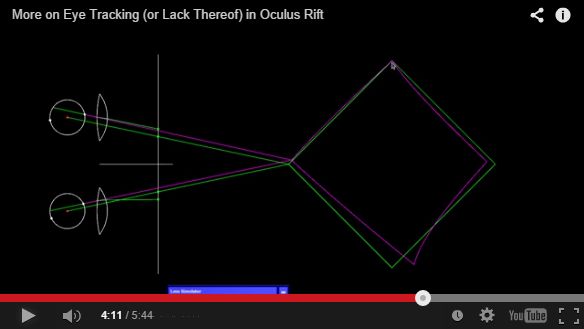

2. He’s really gung-ho about eye tracking, but concedes (to his blog commenters) that placing the virtual camera at the center of the eyeball (rather than at the pupil, whose position is unknown without tracking) is an okay approximation. The point of focus still ends up properly aligned, and the Rift’s lenses help to minimize distortion away from that point.
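To convince myself of the "point of focus stays aligned" claim, here is a rough 2D sketch. It is not Kreylos's simulator, and it models no lenses at all: just a pinhole camera, a flat virtual screen, and an eye that rotates about its center. The names (`project_to_screen`, `pupil_position`, `angular_error`) and the numbers (`EYE_RADIUS`, `SCREEN_Z`, the test points) are my own illustrative assumptions, not measured values.

```python
import math

EYE_RADIUS = 0.012  # assumed distance (m) from the eye's rotation center to the pupil
SCREEN_Z = 1.0      # assumed distance (m) from the eye center to a flat virtual screen


def project_to_screen(camera, point, screen_z=SCREEN_Z):
    """Intersect the ray camera -> point with the plane z = screen_z (2D: x, z)."""
    cx, cz = camera
    px, pz = point
    t = (screen_z - cz) / (pz - cz)
    return (cx + t * (px - cx), screen_z)


def pupil_position(fixation, radius=EYE_RADIUS):
    """Pupil location when the eye, rotating about the origin, looks at `fixation`."""
    fx, fz = fixation
    norm = math.hypot(fx, fz)
    return (radius * fx / norm, radius * fz / norm)


def angular_error(pupil, camera, point):
    """Angle (radians), seen from `pupil`, between where `point` is and where it is drawn."""
    sx, sz = project_to_screen(camera, point)
    true_angle = math.atan2(point[0] - pupil[0], point[1] - pupil[1])
    drawn_angle = math.atan2(sx - pupil[0], sz - pupil[1])
    return abs(true_angle - drawn_angle)


fixation = (0.5, 2.0)    # point the eye is looking at
off_axis = (-0.5, 2.0)   # some other point in the scene
pupil = pupil_position(fixation)
eye_center = (0.0, 0.0)
rest_pupil = (0.0, EYE_RADIUS)  # pupil position when looking straight ahead

# Camera at the eye center: the fixated point is drawn where it should be
# (zero up to float rounding), because the pupil lies on the center -> fixation ray.
print(angular_error(pupil, eye_center, fixation))
# Camera at the eye center: points away from the fixation show a small error.
print(angular_error(pupil, eye_center, off_axis))
# Camera at the rest pupil while the eye has rotated: even the fixated point is off.
print(angular_error(pupil, rest_pupil, fixation))
```

In this toy model the eye-center camera is exact at the point of focus for any gaze direction, and the error only appears away from it. How much the lenses reduce that remaining error is not something this sketch can show.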

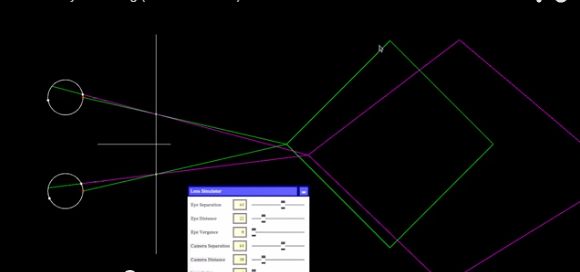

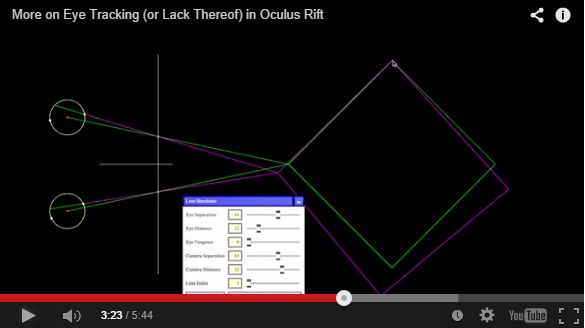

In the following pictures, the eyes are focused on the top corner of the diamond. Green is the actual shape and incoming light; purple is the perceived path of light and perceived shape.

Centered at “rest” pupil (and poorly calibrated?):

Centered in the eye, but no lenses:

Centered, with lenses:

His posts and videos are here:

http://doc-ok.org/?p=756

http://doc-ok.org/?p=764

The first link covers the Rift in general (20 mins); the second covers centering the virtual camera within the eye (5 mins).