Describe the operation of your final project. What does it do and how does it work?

Our project was planned as a space elevator and time travel simulator in which users would start in the future, in space. As delivered, it was a scientifically accurate, high-resolution model of the Earth with day/night cycles, topography, and a cloud layer. One of our group members ran the presentation and supplied the accompanying information while we showed the Earth model. We gave a tour of the globe, noting points of interest and sharing some information about the population and development of the Earth. We also fielded technical questions during the presentation about the accuracy of the model and how it was created.

Describe each team-member’s role as well as contributions to the project.

Bryce: Handled Unity development, coordinated the group via email, and helped with the narration and interaction storyboards. Acquired assets from the Asset Store and modeled the environment. Created custom textures in Photoshop. Found very large NASA images and processed them in Photoshop into a size more manageable for Unity. Set up scene lighting and used emission textures for self and environmental illumination. Scripted animation using linear and spherical interpolation. Added the CAVE scripts to the project and built it with settings worked out with Ross for the CAVE. Set up navigation meshes and agent scripting for more advanced animation and image display. Set up agent and target groups and assigned our sorted category images to each group. Imported the narration and added background music to the project. Tested and troubleshot the project when running on the CAVE. Attempted an Oculus implementation of the project, but with no results as yet.

Liang: Developed and edited the narration and interaction storyboard. Explored academic resources on how to communicate science to the public, and summarized the scientific facts related to each interaction spot in the form of images, pictures, and tables.

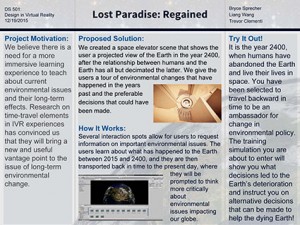

Trevor: Created visualizations of global change in ArcGIS with data from national sources on sea level change, deforestation, pollution, and population. Learned how to use Unity for the purposes of populating the scene with images and placing textures. Found images to draw in the user and create pathos for each interaction point. Designed “posters” to summarize and conceptualize each interaction point and its related choices. Recorded computer narration with Natural Voice, and edited and designed the sound to fit the aesthetic of the scene. Created and wrote copy for project poster and took care of weekly posts.

As a team, describe your feelings about your project. Are you happy, content, frustrated, etc.?

There is a certain undeniable frustration about our project. So much of it worked great on a normal computer system. Many of the early test scenes we worked with in the CAVE were promising, but when features began to be added more rapidly, hard-to-track-down errors occurred. Scripts, textures, and shaders implemented on a desktop system could not reliably be expected to work on the CAVE. These types of errors were often impossible to foresee and often required full overhauls of a feature instead of minor tweaks. This was further compounded by occasional problems stemming from the hardware of the CAVE itself, e.g., graphics card and monitor issues and loss of stereo 3D on some screens. This led to very slow progress in implementing features.

Having said that, the group effect of the CAVE and the large space to move around in were still massive upsides to the system. The showing of the high-resolution, accurate Earth model went very well. Being able to see others’ reactions to the simulation was very informative for learning what did and didn’t work for the project. Furthermore, one could hold a group discussion in the simulation. From asking people to locate or guess about areas of interest on the globe to fielding technical questions about the system, the group dynamic facilitated by the CAVE was unique. This is an excellent medium for lecturing groups on special topics using VR as an enhanced visual aid, which is in a sense what our project became.

What were the largest hurdles you encountered in your project and how did you overcome these obstacles?

Certainly one of the biggest hurdles encountered was the CAVE system itself. The complex nature of the system produced many unexpected results when testing scenes. Object and texture desynchronization between screens was common, as were loss of stereo 3D, image splitting, and massive errors caused by shaders. Further, because the CAVE is a large room, troubleshooting cannot be done effectively from home; attempts at fixes can only be made and then tested at a later date. This very much slowed down troubleshooting and feature implementation, as one could only test once or twice a week. We were often unsure whether old problems had been fixed or whether errors stemmed from newly implemented features. I feel that the Oculus has a massive advantage in this regard, as one can build and test over the course of minutes instead of waiting multiple days to see if one change or fix actually works. We tried to develop for the Oculus at the end of the project cycle, but we were unable to get a build working with that hardware. We were able to get something into the CAVE by cutting our project down to the bare minimum components that we knew functioned well in the CAVE.

The other principal hurdle was the loss of Madeline from the class and group at the end of October. One of the main reasons we chose to pursue the Space Elevator over other ideas was that it seemed to make the best use of all the group’s skills; this was especially true of Madeline’s skills as an interior designer. The project was envisioned as having three main interior scenes that she would design and compose. When we lost her, we lost the majority of the 3D modeling and layout skills in our group. To overcome this, Bryce had to take on those roles in addition to the other necessities of Unity.

We had planned to use video textures in Unity to make visualizations based on the Earth’s surface, but when it came time to implement this there were many issues getting it to work. After much troubleshooting in vain, we discovered that even though the video texture objects exist and can be created in the free version of Unity 5, they do not actually work unless one has the Pro version of Unity 5. This was a maddening discovery, and it raised a sense of enmity against the Unity developers for withholding such a key feature with no clear disclosure. We figured out a way around this by using scripting to swap textures on a target. Trevor decomposed our desired videos into their individual frames, which resulted in a very large number of individual image files. Unfortunately, when it came time to import these into Unity, they always crashed the editor in the process. This led us to ultimately cut this feature, which was very unfortunate.
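The texture-swapping idea comes down to choosing which frame image to show at a given moment. The block below is a minimal sketch of that selection logic in Python, not the project's actual Unity script. The function names, the `frame_0000.png` naming pattern, and the frame rate are all assumptions made for illustration.

```python
def frame_index(elapsed_seconds: float, fps: float, frame_count: int,
                loop: bool = True) -> int:
    """Return which frame of an image sequence to display.

    elapsed_seconds: time since playback started
    fps: playback rate of the original video (assumed value)
    frame_count: number of image files the video was split into
    loop: wrap around at the end instead of holding the last frame
    """
    if frame_count <= 0:
        raise ValueError("frame_count must be positive")
    if fps <= 0:
        raise ValueError("fps must be positive")

    # Whole frames elapsed so far; never go below the first frame.
    index = max(0, int(elapsed_seconds * fps))

    if loop:
        return index % frame_count
    # Without looping, hold on the final frame once playback ends.
    return min(index, frame_count - 1)


def frame_filename(index: int) -> str:
    """Hypothetical naming pattern for the exported frame images."""
    return f"frame_{index:04d}.png"


if __name__ == "__main__":
    # Half a second into a 24 fps clip split into 100 frames.
    i = frame_index(0.5, 24, 100)
    print(i, frame_filename(i))
```

In a game engine the same arithmetic would run once per rendered frame, with the returned index used to pick the texture assigned to the target object. The sketch only covers frame selection; it does not address the import crash described above.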

How well did your project meet your original project description and goals?

Our group wasn’t able to meet our original project description very closely, though this was in large part because features had to be cut when they malfunctioned in the CAVE. Our group stayed on our timeline very well until the last week of November; after that, things got off track. We missed our goal of having all group members familiar with Unity. Our narration and story were delayed because we were trying to build them around the visuals, but many of those kept breaking in the CAVE. This pushed back our ability to record a voiceover, and we instead switched to text-to-speech for the sake of time. Many of the visual tweaks and flair that we wanted to add near the end were also dropped because Bryce had to focus on building and texturing the scenes as well as coming up with the scripting, animations, effects, etc.

If you had more time, what would you do next on your project?

If there were more time, a build for the Oculus would be the next big goal. This would allow us to add back the features that worked well on development computers but not in the CAVE, e.g., nav mesh based animation, the glass space elevator, and imagery. It would also allow for easier integration of user input, as the CAVE’s wand turned out to be quite intricate to implement; on the Oculus, a simple controller-based interaction could be implemented quickly. Another goal would be to find a more stable way to implement the video textures. We had the video broken up into frames, but due to the very large number of individual files they crashed Unity on import.